So the past few weeks I’ve been working on this really interesting project for creating a 3D mobile car environment in real time for teleop (and later autonomous movement). It starts with calibrating a 3D laser rangefinder’s data and a monocular camera’s feed which allows me, after the calibration is done, to map each coordinate in my image to a 3D point in the world coordinates (within the range of my rangefinder, of course). So, any obstacles coming up in the real world environs can then be rendered accurately in my immersive virtual environment. Now since everything’s in 3D the operator’s point of view can be moved around to any required orientation. So, for instance, while parking all that is needed to be done is to shift to an overhead view. In addition, since tele op is relatively laggy, the presence of this rendered environment gives the op a fair idea of the immediate environment, and the ability to continue along projected trajectories rather than stopping for resumption of connection.

So, as the SICK nodder was taking some time to arrive, I decide to play around Gazebo, and simulate the camera, 3D Laser rangefinder and the checkerboard pattern for calibration within it, and thus attempt to calibrate the camera-rangefinder pair from within the simulation. In doing so, I was finally forced to shift to ubuntu (Shakes fist at lazy programmers) which, although smoother, isn’t entirely as bug free as it is made out to be. So, I’ve created models for the camera and rangefinder and implemented gazebo dynamic plugins within them to simulate them. I’ve also spawned a checkerboard textured box, which using a keyboard manipulated node I move around the environment. So this is what it looks like right now

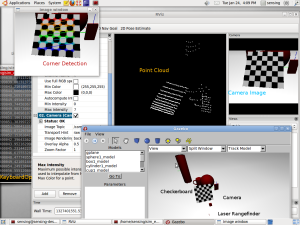

So here, the environment is in the Gazebo window at the bottom, where I've (crudely) annotated the Checkerboard, camera and Laser Rangefinder. There are other bodies to provide a more interesting scan. The point cloud of the scan can be seen in the rviz window in the center, and the camera image at the right. Note the displacement between the two. The KeyboardOp node is running in the background at the left, listening for keystrokes, and a sample HighGui window displaying the detected Chessboard Corners at the top Left.

Looks optimistic!

great work can u provide more technical details on that for example how u implement that calibration. easy tutorial or guidelines would be more appreciable.

Hey Alex

Thanks! So, first for the objects in Gazebo I made URDF models and for the checkerboard I created a png file and mapped that to a solid box as texture. Then, I used Gazebo plugins to simulate a laser rangefinder and a camera and I set their initial positions in the environment. I also used a small ROS node to move the board around using a keyboard (I control the individual rotation about the axes)

For the extrinsic calibration, first, have a look at the next post (and the image captions!) https://icoderaven.wordpress.com/2012/02/08/pnp/

Now, what is actually going on is that both the camera and the lidar are looking at the same point in world space. What we need is a mapping between the two frames of reference, i.e. a transformation matrix consisting of a rotational and a translational component such that we can represent a coordinate in our lidar frame of reference by a corresponding coordinate in our camera frame of reference. To find out this matrix, we need to evaluate a set of point correspondences between the two frames, which is where the OpenCV SolvePnP method comes in (http://opencv.willowgarage.com/documentation/cpp/camera_calibration_and_3d_reconstruction.html#cv-solvepnp). From the published pointcloud I pull the information in a PCL (http://pointclouds.org/) point cloud. Then I create a 2D range image of the view, which is a method within PCL. Now, the points that I click on the range image can be mapped back to the corresponding 3D coordinate in my pointcloud. I then click on the closest matching points on the camera image and then create an array of these sets of 3D-2D pairs and send it over to SolvePnP to return me the required rotation and translation vectors. (I’m also sneaky and include camera calibration in this process – which explains why I use cvFindCheckerboardCorners (http://opencv.willowgarage.com/documentation/camera_calibration_and_3d_reconstruction.html#findchessboardcorners) method to evaluate camera calibration.)

Now after I have the rotation and translational vectors, I basically find the corresponding coordinates of all the point cloud data points in the camera FoV in the camera frame. For the points that do fall in the dimensions of the image, I just map their colours to the pointcloud data point, and then publish this new coloured pointcloud. Which is why you’ll notice in the third screenshot why the shadow region of the checkerboard on the ground also has a checkerboard pattern – the point is correctly mapped to a point in the camera frame, but since the image is 2D and the checkerboard is covering that region, it faithfully maps the checkerboard colour to the 3D datapoint.

I would have released the code as open source, but unfortunately company policy forbade me from disclosing it. If you have any more questions, feel free to ask me here.

Thanks for replying Kumar. Actually i have 2d laser range finder i.e sick and camera mounted on pioneer robot in gazebo. i placed checkerboard in environment and i can move my robot to change the position. Now what i still can not understand is that how these two frames are related for example from my camera i am seeing a 2d image in pixels and from my laser scanner i am given a 2d laser scan with FOV 180. do you have any idea that how it will work for 2d scanner not the point cloud. since camera doesnt see for depth how these two reference frame are mapped with each other. Again if you can provide the exact functions in opencv will be more than welcome.

Thanks alot

Regards

Hey Alex

So, for what you want to do, you would only be able to correlate points in the plane of your SICK. To be able to correlate the points, your camera and the SICK should not be on the same plane. Visualize this as a flat sector spreading out parallel to the ground that you shall be able to see in your camera 2D image. After the extrinsic calibration, you shall be able to predict the depth of the corresponding region in the 2D image based on the relation you obtain after calibration.

This paper http://ieeexplore.ieee.org/xpls/abs_all.jsp?arnumber=1389752&tag=1 does exactly what you want to do, and I recommend going through it to get a better idea.

I think you can still use solvePnP for finding the relationship – just provide the height of the SICK scanning plane as the Z coordinates for your laser scan points. Essentially, what you would be doing is figuring out which line of pixels corresponds to which line in the laser scan, and send these pair of points to solvePnP.

Hope that helps!

Hi Kumar,

sorry to bother you again. i went through the approach proposed in the paper but i am still unsure how to achieve this in opencv. for instance i first calibrated the camera and thentrying to use equation 3 and equation 4 manually to figure out the orientation and translation. i dont know whether i can do it through opencv or not? second thing the solution you posted above by using solvepnp what would be Y in this case for laser range finder wont it be 0?where Z=height of scanner, then how will be calibration possible if we are not giving any value for distance or depth? Any thoughts would be highly appreciable.

Thanks alot.

Hi Alex,

If you look closely at the proof in the paper, they state that for a point Pf in the Laser frame of reference, the coordinates are [ X Z 1 ] since they set the plane of the laser scanner to be Y = 0. Thus, your laser rangefinder returns would go in Pf.

Anyway, the authors have released their source code online in Matlab. http://research.engineering.wustl.edu/~pless/code/calibZip.zip Have a look at that to figure out the inner workings.